Introduction

TurboQuant, developed by Google Research, represents a significant advancement in the field of AI model compression, particularly for large language models (LLMs). It’s a next-generation compression algorithm designed to drastically reduce the memory footprint of AI models, specifically targeting the Key-Value (KV) cache and vector search operations, without any measurable loss in accuracy. This innovation is poised to make powerful AI models more accessible, enabling on-device “sovereign AI” by making them runnable on significantly smaller hardware, potentially as early as 2026.

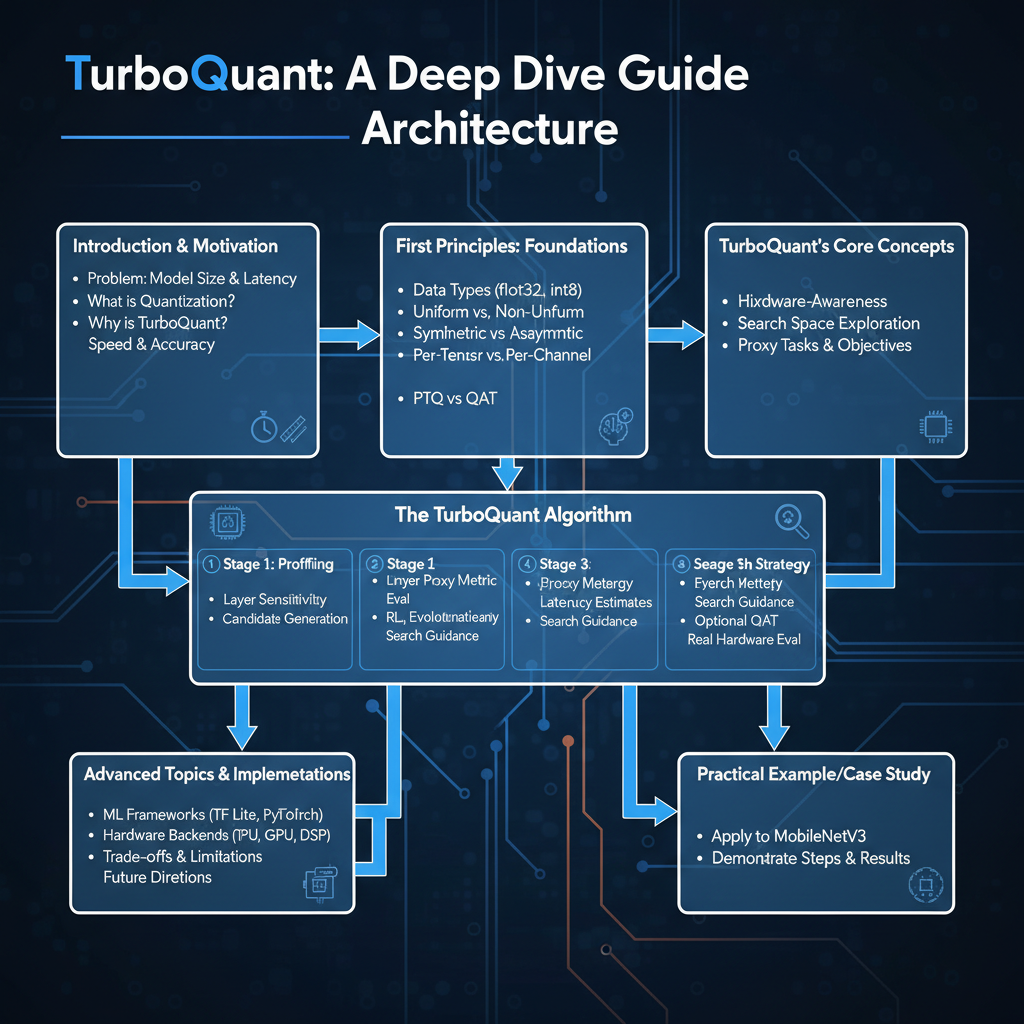

Understanding the internal mechanisms of TurboQuant is crucial for anyone looking to optimize LLM deployments, extend context windows, or develop AI applications for resource-constrained environments like mobile devices. This guide will take you from the fundamental challenges of LLM memory usage to the intricate details of how TurboQuant achieves its unprecedented compression and accuracy.

In this deep dive, you will learn about TurboQuant’s high-level architecture, its step-by-step compression and decompression processes, the core algorithms like PolarQuant and QJL that power it, and how it delivers its impressive performance gains. We will explore its mathematical foundations and provide conceptual code examples to solidify your understanding.

The Problem It Solves

Large Language Models (LLMs) are incredibly powerful but come with substantial computational and memory demands. A significant portion of this demand stems from the Key-Value (KV) cache, also known as “working memory” during inference. In transformer-based LLMs, the attention mechanism requires storing previously computed “keys” and “values” for each token in the input sequence. As the context window (the length of the input sequence) grows, the KV cache scales linearly, consuming vast amounts of GPU memory. This memory bottleneck limits the maximum context length an LLM can process and dictates the hardware requirements for running these models.

Before TurboQuant, existing compression techniques, primarily various forms of quantization (e.g., INT8, INT4), often involved a trade-off: reducing model size and memory usage typically came at the cost of some accuracy degradation. While these methods offered significant practical benefits, achieving “zero accuracy loss” while dramatically shrinking the KV cache remained a major challenge. The core problem TurboQuant addresses is: How can we achieve extreme compression of the LLM KV cache to enable longer context windows and on-device inference, without sacrificing model accuracy or introducing bias?

High-Level Architecture

TurboQuant is not a single compression technique but rather a synergistic combination of two primary components: PolarQuant for the main compression and QJL (Quantization with Johnson-Lindenstrauss) residual correction for maintaining accuracy.

The overall architecture involves compressing the raw Key and Value vectors from the LLM’s attention mechanism into a highly compact representation. This compressed data is then stored in the KV cache. During inference, when attention scores need to be calculated, the compressed vectors are quickly decompressed (or used in their compressed form for inner product estimation) to reconstruct a close approximation of the original vectors, ensuring the attention mechanism operates as intended.

Component Overview:

- TurboQuant Compressor: Takes a high-precision (e.g., FP16 or BF16) Key or Value vector and transforms it into a highly compressed format.

- PolarQuant Encoding: Captures the “main concept” or significant information of the vector using very few bits.

- Residual Calculation: Determines the difference between the original vector and the vector reconstructed from PolarQuant.

- QJL Correction Encoding: Applies a specialized error-checking mechanism to this residual, encoding critical information to eliminate bias.

- Compressed KV Cache: Stores the output from the compressor, which consists of the PolarQuant index, the QJL correction bit(s), and possibly the norm of the residual.

- TurboQuant Decompressor: Takes the compressed data from the KV cache and reconstructs an approximate vector suitable for attention computation.

- PolarQuant Decoding: Reconstructs the main component of the vector from its index.

- QJL Correction Decoding: Reconstructs the residual correction based on the QJL information.

- Vector Reconstruction: Combines the main component and the residual correction to form the final approximate vector.

- Attention Mechanism: Uses the reconstructed (or directly estimated from compressed) KV vectors to compute attention scores, leading to the LLM’s output.

Data Flow: During LLM inference, as new tokens are processed, their Key and Value vectors are generated. These vectors are then passed through the TurboQuant Compressor. The output is stored in the KV cache. When the LLM needs to compute attention for a subsequent token, it retrieves the compressed Keys and Values from the cache, passes them through the TurboQuant Decompressor, and uses the resulting reconstructed vectors to calculate attention scores. This entire process happens with extreme efficiency, leading to significant memory savings and speedups.

How It Works: Step-by-Step Breakdown

TurboQuant operates through a sophisticated two-stage compression and correction process. Let’s break down the QUANTprod (quantization for inner product) and DEQUANTprod (dequantization for inner product) procedures.

Step 1: Input Vector and Bit-width Definition

The process begins with an input vector x (either a Key or Value vector from an LLM layer) of dimension d. A target bit-width b is specified for the overall compression, which typically refers to the bit-width of the PolarQuant component. For example, TurboQuant often uses 3-bit KV cache compression.

Step 2: PolarQuant for Main Compression (TURBOQUANTmse)

The first stage of compression uses a component referred to as TURBOQUANTmse (likely a Mean Squared Error-optimized quantizer, which is PolarQuant).

- Random Rotation (PolarQuant Specific): Although not explicitly shown in the provided pseudocode snippet, PolarQuant’s core idea is to first rotate the input vector

xrandomly. This rotation aims to make the coordinates of the vector follow a more concentrated distribution (e.g., a Beta distribution), which is easier to quantize efficiently. - Quantization: The rotated vector is then quantized using a method like Lloyd-Max quantizer, specifically optimized for a Beta distribution. This quantizer maps the high-precision vector to a low-bit index

idx. Thisidxrepresents the “main concept” of the vector, capturing most of its information with very few bits (e.g.,b-1bits as per the pseudocode).

# Conceptual Python-like pseudocode for PolarQuant (TURBOQUANTmse)

def TURBOQUANTmse_quantize(vector_x, bit_width_b_minus_1):

# This is a conceptual representation. Actual PolarQuant involves:

# 1. Random rotation of vector_x

# 2. Lloyd-Max quantization tailored for a concentrated distribution (e.g., Beta)

# The output is a low-bit index

idx = perform_polarquant_quantization(vector_x, bit_width_b_minus_1)

return idx

def TURBOQUANTmse_dequantize(idx, bit_width_b_minus_1):

# Reconstructs the main component from the index

reconstructed_vector_mse = perform_polarquant_dequantization(idx, bit_width_b_minus_1)

return reconstructed_vector_mse

Step 3: Residual Vector Calculation

After obtaining the main compressed representation (idx), an initial reconstruction of the vector, DEQUANTmse(idx), is performed. The difference between the original input vector x and this initial reconstruction is calculated to form the residual vector r. This residual r contains the information that PolarQuant couldn’t capture, representing the “error” or “noise.”

# From Algorithm 2:

# ...

# 5: idx <- QUANTmse(x)

# 6: r <- x - DEQUANTmse(idx) {residual vector}

# ...

Step 4: QJL Correction on Residual Vector

To address the information lost in the residual, TurboQuant employs QJL (Quantization with Johnson-Lindenstrauss).

- Random Projection: A random projection matrix

S(with i.i.d. entries from N(0, 1)) of sized x dis generated. This matrix is used to project the residual vectorr. - Sign Extraction: The sign of the projected residual vector

S * ris taken, resulting in a binary vectorqjl. Thisqjlvector essentially acts as an error-checking mechanism. It’s a 1-bit residual correction, as described in theturboquant.netsource, designed to capture crucial directional information from the residual.

# From Algorithm 2:

# ...

# 3: Generate a random projection matrix S in R^dxd with i.i.d. entries Si,j ~ N(0, 1)

# ...

# 7: qjl <- sign (S * r) {QJL on residual vector}

# ...

Step 5: Storing Compressed Data

The compressed representation of the original vector x consists of three components:

idx: The low-bit index fromTURBOQUANTmse(PolarQuant).qjl: The sign vector from the QJL correction.||r||^2: The squared L2 norm (magnitude) of the residual vectorr. This norm (gammain the dequantization step) is essential for scaling the QJL correction during reconstruction.

These three components together form the compact representation stored in the KV cache.

Step 6: Decompression and Reconstruction (DEQUANTprod)

During the attention computation, these compressed components are retrieved from the KV cache and used to reconstruct an approximate vector.

- PolarQuant Dequantization: The

idxis passed toDEQUANTmse(idx)to reconstruct the main component of the vector, denoted asx_mse_hat. - QJL Dequantization: The

qjlvector and the residual normgammaare used with the random projection matrixSto reconstruct the QJL correction component,x_qjl_hat. The formula involvessqrt(pi/2d) * gamma * S^T * qjl. - Final Reconstruction: The final reconstructed vector

x_hatis the sum ofx_mse_hatandx_qjl_hat. Thisx_hatis mathematically identical to full-precision models in terms of attention scores, thanks to the bias elimination property of QJL.

# From Algorithm 2:

# Procedure DEQUANTprod(idx, qjl, gamma)

# 10: x_mse_hat <- DEQUANTmse(idx)

# 11: x_qjl_hat <- sqrt(pi/2d) * gamma * S^T * qjl

# 12: output: x_mse_hat + x_qjl_hat

This reconstructed vector x_hat is then used directly in the attention mechanism to calculate similarity scores between keys and queries, and to weigh values.

Deep Dive: Internal Mechanisms

TurboQuant’s effectiveness stems from the clever interplay of PolarQuant and QJL, each addressing a specific aspect of efficient and accurate vector compression.

Mechanism 1: PolarQuant (TURBOQUANTmse)

PolarQuant is the primary compression mechanism, responsible for capturing the bulk of a vector’s information with minimal bits.

- Principle: The core idea is that by applying a random rotation to a high-dimensional vector, its coordinates tend to become more concentrated around zero, following a distribution that is more amenable to efficient quantization. The

turboquant.netsource specifically mentions that PolarQuant “rotates the vector randomly so coordinates follow a concentrated distribution that is easy to quantize.” - Quantization Strategy: Once rotated, the vector’s coordinates are quantized using a technique like Lloyd-Max quantization. This is an iterative algorithm that finds optimal quantization levels (centroids) and decision boundaries for a given probability distribution to minimize the mean squared error (MSE) of quantization. TurboQuant often uses a Beta distribution as the target distribution for this quantization, as it is well-suited for concentrated, bounded data.

- Output: The output of PolarQuant is a low-bit index (

idx) that points to a specific centroid in the quantizer’s codebook. This index is a highly compact representation of the original vector’s main direction and magnitude. Theb-1bit-width mentioned in the ICLR paper forTURBOQUANTmseindicates a very aggressive compression here.

Mechanism 2: QJL Error-Checking Bit (Residual Correction)

This is the crucial component that differentiates TurboQuant from other quantization methods by enabling “zero accuracy loss.”

- Addressing Quantization Bias: Traditional quantization often introduces bias, especially when vectors are used in inner products (like attention scores). This bias can accumulate and lead to accuracy degradation. QJL specifically targets this.

- Johnson-Lindenstrauss Lemma Connection: The “JL” in QJL likely refers to the Johnson-Lindenstrauss lemma, which states that a set of points in a high-dimensional Euclidean space can be embedded into a much lower-dimensional space such that the distances between the points are approximately preserved. While QJL isn’t a direct dimensionality reduction in the traditional sense here, it leverages random projections (a core idea in JL) to capture critical information about the residual.

- How it Works:

- A random projection matrix

Sis generated. This matrix is applied to the residual vectorr(the error from PolarQuant). - Instead of storing the full projected vector, only the sign of each component of

S * ris stored asqjl. This makesqjla highly compact, typically 1-bit per dimension vector. - During decompression,

qjlis scaled bygamma(the norm of the residual) and multiplied by the transpose of the random projection matrixS^T. Thesqrt(pi/2d)factor is a mathematical constant used to correctly scale this reconstruction.

- A random projection matrix

- Bias Elimination: Google Research’s tests showed that by using the QJL error-checking bit, TurboQuant “can eliminate the bias that usually plagues compressed models, allowing for attention scores that are mathematically identical to full-precision models.” This is the key to its “zero accuracy loss” claim. The QJL component acts as a highly efficient, mathematically grounded correction mechanism for the errors introduced by the aggressive initial PolarQuant compression. It corrects for the directional error and scaling of the residual.

Mechanism 3: KV Cache and Attention Integration

- Memory Reduction: By storing

idx,qjl, andgammainstead of full-precision vectors, TurboQuant achieves at least a 6x reduction in KV cache memory usage. For a 3-bit KV cache, this is a massive saving. - Speedup: The reduced memory footprint leads to faster memory access. More importantly, the attention calculation can leverage the compressed representation. The

DEQUANTprodprocedure is optimized for inner product estimation. This means that the decompression and inner product calculation for attention can be highly optimized, leading to up to 8x speedups in attention computation (reported for 4-bit mode). - Software-only Implementation: The efficiency of TurboQuant means that even with software-only implementations, it can enable very long context windows (e.g., 32K+ tokens) on devices like smartphones, making on-device AI a reality.

Hands-On Example: Building a Mini Version

Let’s illustrate the core QUANTprod and DEQUANTprod procedures using Python-like pseudocode, directly inspired by the ICLR 2026 paper’s Algorithm 2. This simplified example focuses on the mathematical operations involved.

import numpy as np

# --- Configuration ---

d = 128 # Dimension of the vector (e.g., hidden dimension of an LLM)

b = 3 # Bit-width for PolarQuant (TURBOQUANTmse)

# For simplicity, we'll use a placeholder for TURBOQUANTmse_quantize/dequantize

# In a real system, these would be sophisticated Lloyd-Max quantizers.

# --- Global Components (pre-generated) ---

# S: Random projection matrix for QJL

# In a real scenario, S would be fixed and known to both quantizer and dequantizer.

S = np.random.randn(d, d) # i.i.d entries ~ N(0, 1)

# --- Placeholder for TURBOQUANTmse (PolarQuant) ---

# In a real implementation, this would involve random rotation,

# and a Lloyd-Max quantizer trained on a Beta distribution.

# For this mini example, we'll simulate it by simply quantizing to a few discrete levels

# and adding some noise to represent the 'residual'.

_centroids = np.linspace(-1, 1, 2**(b-1)) # Example centroids for b-1 bits

def _TURBOQUANTmse_quantize_simple(x_vec):

"""

Simplified PolarQuant (TURBOQUANTmse) for demonstration.

Finds the closest centroid and returns its index.

"""

# Simulate a simple scalar quantization across dimensions

# In reality, this is a vector quantizer.

quantized_x = np.mean(x_vec)

closest_centroid_idx = np.argmin(np.abs(_centroids - quantized_x))

return closest_centroid_idx

def _TURBOQUANTmse_dequantize_simple(idx):

"""

Simplified PolarQuant (TURBOQUANTmse) dequantization.

Returns a vector where all elements are the centroid value.

"""

# In reality, this would reconstruct a full vector from the centroid.

return np.full(d, _centroids[idx])

# --- TURBOQUANTprod: Optimized for Inner Product (Compression) ---

def TURBOQUANTprod_quantize(x_vec):

"""

Compresses an input vector x_vec using TurboQuant.

Input:

x_vec: The original high-precision vector (e.g., Key or Value vector)

Output:

A tuple (idx, qjl, residual_norm_sq) representing the compressed vector.

"""

# Step 1: PolarQuant (TURBOQUANTmse) for main compression

idx = _TURBOQUANTmse_quantize_simple(x_vec)

# Step 2: Initial reconstruction from PolarQuant

x_mse_hat = _TURBOQUANTmse_dequantize_simple(idx)

# Step 3: Calculate residual vector

r_vec = x_vec - x_mse_hat

# Step 4: QJL on residual vector

# Project residual with random matrix S

s_dot_r = np.dot(S, r_vec)

# Take the sign of the projected residual

qjl = np.sign(s_dot_r)

# Store the squared L2 norm of the residual

residual_norm_sq = np.sum(r_vec**2) # This is gamma in DEQUANTprod

return idx, qjl, residual_norm_sq

# --- DEQUANTprod: Optimized for Inner Product (Decompression) ---

def TURBOQUANTprod_dequantize(idx, qjl, gamma_sq_norm):

"""

Decompresses a TurboQuant-compressed representation back into a vector.

Input:

idx: PolarQuant index

qjl: QJL sign vector

gamma_sq_norm: Squared L2 norm of the residual (gamma in the paper refers to ||r||_2)

Output:

reconstructed_x: The approximated vector.

"""

# Step 1: Reconstruct main component from PolarQuant

x_mse_hat = _TURBOQUANTmse_dequantize_simple(idx)

# Step 2: Reconstruct QJL correction component

# gamma is the L2 norm, so we need sqrt(gamma_sq_norm)

gamma = np.sqrt(gamma_sq_norm) if gamma_sq_norm > 0 else 0

# Mathematical constant for QJL reconstruction

qjl_scaling_factor = np.sqrt(np.pi / (2 * d)) * gamma

# Reconstruct QJL component: S_transpose * qjl * scaling_factor

x_qjl_hat = qjl_scaling_factor * np.dot(S.T, qjl)

# Step 3: Combine for final reconstructed vector

reconstructed_x = x_mse_hat + x_qjl_hat

return reconstructed_x

# --- Demonstration ---

print(f"Vector dimension (d): {d}")

print(f"PolarQuant bit-width (b-1): {b-1}")

# Original high-precision vector

original_vector = np.random.rand(d) * 10 - 5 # Example vector between -5 and 5

print("\nOriginal vector (first 5 elements):")

print(original_vector[:5])

# Compress the vector

compressed_idx, compressed_qjl, compressed_gamma_sq = TURBOQUANTprod_quantize(original_vector)

print("\nCompressed components:")

print(f" PolarQuant Index (idx): {compressed_idx}")

print(f" QJL Sign Vector (qjl, first 5 elements): {compressed_qjl[:5]}")

print(f" Residual Squared Norm (gamma_sq): {compressed_gamma_sq}")

# Decompress the vector

reconstructed_vector = TURBOQUANTprod_dequantize(compressed_idx, compressed_qjl, compressed_gamma_sq)

print("\nReconstructed vector (first 5 elements):")

print(reconstructed_vector[:5])

# Calculate reconstruction error (MSE)

mse = np.mean((original_vector - reconstructed_vector)**2)

print(f"\nMean Squared Error between original and reconstructed: {mse:.6f}")

# Note: The 'zero accuracy loss' claim applies to attention scores,

# not necessarily to the direct MSE of the reconstructed vector in isolation.

# The QJL correction specifically eliminates bias in inner product calculations.

This mini example provides a conceptual walkthrough. In a real implementation, _TURBOQUANTmse_quantize_simple and _TURBOQUANTmse_dequantize_simple would be replaced by a highly sophisticated vector quantizer (PolarQuant) that operates on the randomly rotated vector coordinates, using a trained codebook. The S matrix would also be carefully managed, often being a fixed, pre-generated matrix.

Real-World Project Example

While a full open-source implementation of TurboQuant is not yet publicly available (as of March 2026, it’s a Google Research project with ICLR 2026 presentation), we can illustrate how it would conceptually integrate into an existing LLM inference engine like llama.cpp or Ollama, which are designed for local LLM execution. The key is that the KV cache management and attention computation would be modified to use TurboQuant’s QUANTprod and DEQUANTprod.

Let’s imagine an LLM inference pipeline where llama.cpp is processing tokens.

Conceptual Integration into an LLM Inference Engine:

import numpy as np

# Assume TURBOQUANTprod_quantize and TURBOQUANTprod_dequantize are implemented as above

# and S is globally available and consistent.

# --- Mock LLM Components ---

class MockLLMLayer:

def __init__(self, layer_idx, model_dim=128):

self.layer_idx = layer_idx

self.query_proj = np.random.rand(model_dim, model_dim)

self.key_proj = np.random.rand(model_dim, model_dim)

self.value_proj = np.random.rand(model_dim, model_dim)

def forward(self, input_embedding):

# Simulate Q, K, V projections

query = np.dot(input_embedding, self.query_proj)

key = np.dot(input_embedding, self.key_proj)

value = np.dot(input_embedding, self.value_proj)

return query, key, value

# --- Mock KV Cache ---

class KV_Cache:

def __init__(self, num_layers, max_context_len):

self.num_layers = num_layers

self.max_context_len = max_context_len

# Store compressed KV pairs

self.compressed_keys = [[] for _ in range(num_layers)] # List of lists for (idx, qjl, gamma_sq)

self.compressed_values = [[] for _ in range(num_layers)]

def add_token_kv(self, layer_idx, compressed_key, compressed_value):

self.compressed_keys[layer_idx].append(compressed_key)

self.compressed_values[layer_idx].append(compressed_value)

# Handle context window overflow (e.g., remove oldest)

if len(self.compressed_keys[layer_idx]) > self.max_context_len:

self.compressed_keys[layer_idx].pop(0)

self.compressed_values[layer_idx].pop(0)

def get_layer_keys_values(self, layer_idx):

# Decompress all keys/values for the current layer

decompressed_keys = [TURBOQUANTprod_dequantize(*k) for k in self.compressed_keys[layer_idx]]

decompressed_values = [TURBOQUANTprod_dequantize(*v) for v in self.compressed_values[layer_idx]]

return np.array(decompressed_keys), np.array(decompressed_values)

# --- Mock Attention Mechanism ---

def calculate_attention(query, keys, values):

if keys.shape[0] == 0: # No context yet

return np.zeros_like(query)

# Simplified dot-product attention

# query: (model_dim,)

# keys: (seq_len, model_dim)

# values: (seq_len, model_dim)

# Attention scores: (seq_len,)

attention_scores = np.dot(keys, query) / np.sqrt(query.shape[0])

attention_weights = np.exp(attention_scores - np.max(attention_scores)) # Softmax for numerical stability

attention_weights /= np.sum(attention_weights)

# Context vector: (model_dim,)

context_vector = np.dot(attention_weights, values)

return context_vector

# --- LLM Inference Loop with TurboQuant ---

def run_llm_inference_with_turboquant(num_layers, model_dim, max_context_len, input_sequence_embeddings):

llm_layers = [MockLLMLayer(i, model_dim) for i in range(num_layers)]

kv_cache = KV_Cache(num_layers, max_context_len)

output_embeddings = []

for token_idx, input_embedding in enumerate(input_sequence_embeddings):

print(f"\nProcessing token {token_idx + 1}...")

current_embedding = input_embedding

for layer_idx, layer in enumerate(llm_layers):

# 1. Generate Q, K, V for current token

query, key, value = layer.forward(current_embedding)

# 2. Compress Key and Value using TurboQuant

compressed_key = TURBOQUANTprod_quantize(key)

compressed_value = TURBOQUANTprod_quantize(value)

# 3. Store compressed K, V in KV cache

kv_cache.add_token_kv(layer_idx, compressed_key, compressed_value)

# 4. Retrieve and decompress historical K, V for attention

historical_keys, historical_values = kv_cache.get_layer_keys_values(layer_idx)

# 5. Calculate Attention using the (reconstructed) K, V

context_vector = calculate_attention(query, historical_keys, historical_values)

# 6. Simulate combining context with query for next layer input

# (In a real LLM, this would involve more complex operations like residual connections, etc.)

current_embedding = query + context_vector # Simplified next layer input

output_embeddings.append(current_embedding)

return output_embeddings

# --- Setup and Run ---

num_layers = 2

model_dim = 128

max_context_len = 10 # Small context for demonstration

sequence_length = 5 # Number of tokens to process

# Simulate input embeddings for a sequence

input_embeddings = [np.random.rand(model_dim) for _ in range(sequence_length)]

# Run the inference

final_outputs = run_llm_inference_with_turboquant(

num_layers, model_dim, max_context_len, input_embeddings

)

print("\nInference complete. Final output embedding for last token (first 5 elements):")

print(final_outputs[-1][:5])

What to Observe:

- Memory Footprint (Conceptual): In a real system,

kv_cache.compressed_keysandkv_cache.compressed_valueswould store significantly less data than if they were storing full-precisionnp.arrayobjects. For instance,compressed_keyis a tuple(idx, qjl, residual_norm_sq).idxis a single integer,qjlis a vector ofdsigns (which can be packed into very few bits), andresidual_norm_sqis a single float. This is much smaller than ad-dimensional float vector. - Attention Calculation: The

calculate_attentionfunction receiveshistorical_keysandhistorical_valuesthat have been reconstructed byTURBOQUANTprod_dequantize. TurboQuant’s strength lies in ensuring that these reconstructed vectors allow attention scores that are mathematically equivalent to full-precision, eliminating bias. - Performance (Conceptual): While not directly measurable in this pseudocode, the

kv_cache.get_layer_keys_valuesoperation would be much faster if the decompression happens efficiently on specialized hardware or optimized software, as it’s retrieving smaller chunks of data from memory.

Performance & Optimization

TurboQuant delivers compelling performance gains and optimizations primarily by tackling the memory bottleneck of the KV cache:

- Extreme Memory Reduction: The most significant benefit is the drastic reduction in KV cache memory usage. Reported figures indicate “at least 6x” lower memory use, with 3-bit KV cache compression being a key target. This directly translates to:

- Longer Context Windows: More past tokens can be stored in the available memory, enabling LLMs to handle much longer input sequences without running out of VRAM. This is critical for complex tasks requiring extensive context.

- Smaller Hardware Requirements: Models that previously needed high-end GPUs can now run on mid-range or even consumer-grade hardware.

- On-Device AI: The ability to run large models on mobile phones or edge devices becomes a practical reality, fostering “sovereign AI” where data processing happens locally.

- Attention Speedup: Beyond memory, TurboQuant also accelerates the attention mechanism itself. By optimizing the inner product estimation using the compressed representations, it delivers “up to 8x faster attention” (reported for 4-bit mode). This is due to:

- Reduced Data Movement: Less data needs to be fetched from memory, improving memory bandwidth utilization.

- Optimized Decompression/Estimation: The

DEQUANTprodprocedure is designed to be highly efficient for reconstructing vectors specifically for inner product calculations, rather than general-purpose high-fidelity reconstruction.

- Zero Accuracy Loss: This is a critical optimization. Unlike many other quantization methods that trade accuracy for compression, TurboQuant’s QJL component specifically eliminates the bias introduced by compression, ensuring that attention scores are mathematically identical to those computed with full-precision models. This means developers don’t have to compromise model performance for efficiency.

- Trade-offs: While offering immense benefits, the “zero accuracy loss” claim is specific to the attention scores. The reconstructed vectors themselves might not be bit-for-bit identical to the original full-precision vectors, but their properties relevant to the attention mechanism (specifically, their inner products) are preserved without bias. The computational overhead of the compression/decompression steps is negligible compared to the memory and speed benefits.

Common Misconceptions

- “Zero Accuracy Loss” means the reconstructed vector is identical: This is a common misunderstanding. TurboQuant’s “zero accuracy loss” refers specifically to the mathematical equivalence of attention scores compared to full-precision models. The reconstructed vector might not be bit-for-bit identical to the original, but the QJL correction ensures that the bias typically introduced by compression into inner product calculations (which form the basis of attention) is eliminated. This preserves the model’s effective performance.

- It’s just another form of quantization: While TurboQuant involves quantization (PolarQuant), it’s significantly more advanced than simple fixed-point or block-wise quantization. The combination with QJL for residual correction and bias elimination is what makes it a “next-generation” algorithm and distinguishes it from traditional lossy compression methods.

- It compresses the entire model: TurboQuant specifically targets the KV cache and vector search. While it can be combined with other techniques like INT4 quantization for model weights, its primary innovation and impact are on the runtime “working memory” (KV cache) during inference.

- It’s a general-purpose data compression algorithm: TurboQuant is highly specialized for AI model vectors, particularly those used in transformer architectures. Its mechanisms (random rotations for concentrated distributions, QJL for bias-free inner products) are tailored to the mathematical properties of these vectors and their use in attention.

Advanced Topics

- Integration with Weight Quantization: To maximize total compression and efficiency, TurboQuant for the KV cache can be synergistically combined with weight quantization (e.g., INT4 for model weights). This allows for both smaller models on disk and a more compact runtime memory footprint.

- Software-Only Implementations: The design of TurboQuant, particularly its use of mathematical operations that can be efficiently implemented, makes it suitable for software-only deployments. This is crucial for enabling powerful LLMs on devices without dedicated AI accelerators, like standard smartphones.

- Adaptability to Different Architectures: While designed for transformer KV caches, the underlying principles of bias-free inner product estimation could potentially be adapted or inspire similar techniques for other vector-heavy AI tasks or architectures where preserving relative distances or similarity is paramount.

- Hardware Acceleration: Although effective in software, TurboQuant’s operations (matrix multiplications, sign operations, quantization lookups) are highly amenable to hardware acceleration (e.g., on custom AI chips or specialized GPU kernels), which could further boost its performance beyond the reported 8x speedup.

Comparison with Alternatives

Traditional Quantization (e.g., INT8, INT4):

- How it works: Directly maps floating-point numbers to lower-precision integers (e.g., 8-bit, 4-bit) often with a simple scaling factor and offset.

- Trade-offs: Achieves significant memory reduction and speedup. However, it typically introduces some level of accuracy loss and bias, which can degrade model performance, especially for aggressive quantization (like INT4). Requires careful calibration to minimize this impact.

- TurboQuant vs. Traditional: TurboQuant specifically addresses and eliminates this bias for attention scores, ensuring “zero accuracy loss” while achieving comparable or even greater compression ratios for the KV cache.

Sparse Attention/KV Cache Pruning:

- How it works: Instead of compressing, these methods aim to reduce the size of the KV cache by only storing or attending to a subset of tokens (e.g., local attention, windowed attention, or discarding less important tokens).

- Trade-offs: Can achieve memory savings and speedups, but often involves heuristics for determining which tokens to keep, which might lead to information loss or reduced context understanding.

- TurboQuant vs. Pruning: TurboQuant compresses all tokens in the KV cache, preserving the full context, rather than discarding information. It’s a complementary approach; one could potentially combine sparse attention with TurboQuant to achieve even greater efficiencies.

Other Vector Quantization Methods:

- How it works: Various algorithms exist to map high-dimensional vectors to discrete codes (e.g., product quantization, residual quantization).

- Trade-offs: Can be effective for compression, but often face similar challenges to traditional quantization regarding accuracy preservation in specific contexts like attention mechanisms, especially concerning bias in inner products.

- TurboQuant vs. Others: TurboQuant’s novelty lies in its specific combination of PolarQuant (which itself is an advanced vector quantization method tailored for certain distributions) with the QJL residual correction, which is purpose-built to eliminate bias in inner product computations, a critical aspect for LLM attention.

In essence, TurboQuant stands out by offering extreme compression with a mathematically rigorous guarantee of bias elimination for attention scores, a combination that was previously elusive in the field of AI model compression.

Debugging & Inspection Tools

Debugging and inspecting TurboQuant’s internals would primarily involve understanding the transformation of vectors at various stages within an LLM inference pipeline.

Memory Profilers:

- Purpose: To confirm the reduction in KV cache memory usage.

- How to use: Tools like

nvprof(for NVIDIA GPUs),perf(Linux), or custom memory tracking within the inference engine (e.g.,llama.cpp’s internal memory reporting) can show the actual memory footprint of the KV cache with and without TurboQuant. - What to look for: A significant drop (6x or more) in the memory allocated for the KV cache data structures.

Custom Logging and Tracing:

- Purpose: To observe the values of vectors at each stage of compression and decompression.

- How to use: Modify the inference engine’s source code (if accessible) to print or log:

- Original Key/Value vectors (full precision).

- PolarQuant

idxvalues. - Residual vectors

r. - QJL

qjlvectors andgamma(residual norm). - Reconstructed

x_mse_hatandx_qjl_hat. - Final

reconstructed_vector. - The difference (

MSE) between the original and reconstructed vectors.

- What to look for: Verify that

idxandqjlare indeed low-bit representations. Observe the magnitude of the residualrand howx_qjl_hatcorrects for it. Confirm that thereconstructed_vectoris numerically close to theoriginal_vector, especially in terms of inner product similarity with other vectors.

Attention Score Comparison:

- Purpose: To validate the “zero accuracy loss” claim.

- How to use: Run the same LLM inference task:

- With TurboQuant enabled.

- With full-precision KV cache (if possible, or another high-fidelity baseline).

- Capture the attention scores (dot products between queries and keys) at various layers for a given input sequence.

- What to look for: The attention score matrices should be numerically very similar, with minimal to no bias, between the TurboQuant and full-precision runs. This is the ultimate metric for “zero accuracy loss.”

Model Output Evaluation:

- Purpose: To ensure that the internal accuracy preservation translates to downstream task performance.

- How to use: Run the LLM on standard benchmarks (e.g., MMLU, Hellaswag, humaneval) with and without TurboQuant.

- What to look for: The final metrics (accuracy, F1 score, perplexity, etc.) should be virtually identical, indicating that the internal compression does not degrade the model’s overall utility.

Key Takeaways

- Extreme KV Cache Compression: TurboQuant dramatically reduces the memory footprint of the LLM Key-Value cache (at least 6x), enabling longer context windows and running models on smaller hardware.

- Zero Accuracy Loss: Unlike traditional quantization, TurboQuant achieves this compression with “zero accuracy loss” for attention scores, thanks to its sophisticated bias elimination mechanism.

- Two-Stage Approach: It combines PolarQuant (for main, low-bit compression) and QJL (Quantization with Johnson-Lindenstrauss) residual correction (for bias-free accuracy).

- QJL’s Role: QJL uses a 1-bit residual correction based on random projections to eliminate bias in inner product calculations, ensuring attention scores remain mathematically identical to full-precision.

- Performance Boost: The reduced memory and optimized inner product estimation lead to significant speedups in attention computation (up to 8x).

- Enabling On-Device AI: TurboQuant makes powerful LLMs feasible for deployment on resource-constrained edge devices like smartphones, fostering “sovereign AI.”

- Not Just Quantization: It’s a next-generation approach that transcends simple bit-reduction by specifically addressing the unique challenges of preserving accuracy in high-dimensional vector operations critical to LLMs.

This knowledge is particularly useful for AI researchers, MLOps engineers, and developers working on deploying LLMs, especially in environments where memory and computational efficiency are paramount.

References

- TurboQuant Explained: How to Use Google’s Extreme AI Compression with Ollama and llama.cpp

- Google unveils TurboQuant, a new AI memory compression algorithm — and yes, the internet is calling it ‘Pied Piper’ | TechCrunch

- Google Introduces TurboQuant: A New Compression Algorithm that Reduces LLM Key-Value Cache Memory by 6x and Delivers Up to 8x Speedup, All with Zero Accuracy Loss - MarkTechPost

- Published as a conference paper at ICLR 2026 TURBOQUANT:

- TurboQuant - Extreme Compression for AI Efficiency

Transparency Note

The information provided in this guide is based on publicly available research papers, technical articles, and announcements from Google Research and related publications as of March 2026. As an active area of research, specific implementation details and performance benchmarks may evolve. The “Hands-On Example” and “Real-World Project Example” use simplified pseudocode to illustrate core concepts, as a full, runnable open-source implementation of TurboQuant is not yet widely available.